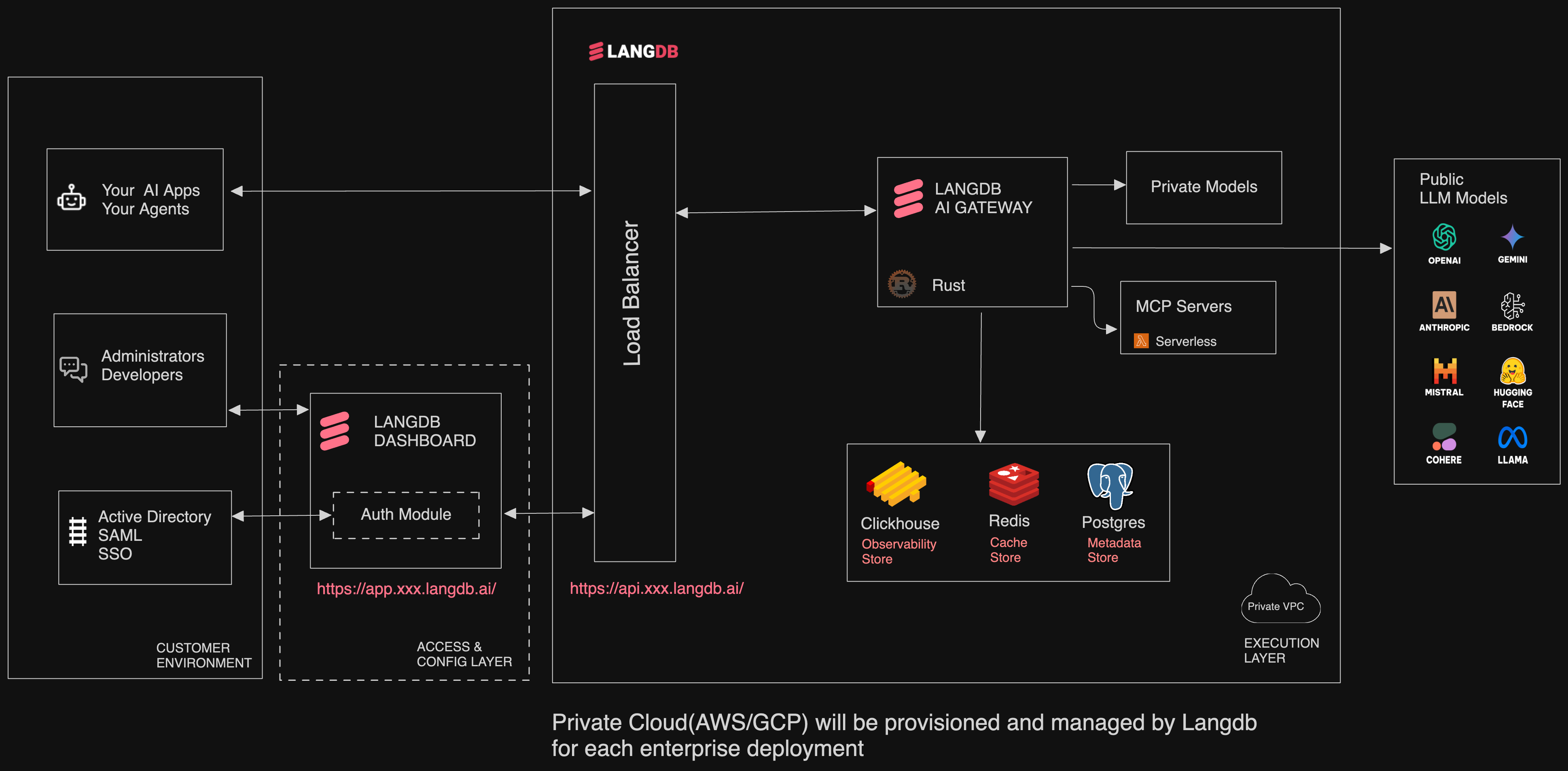

Architecture Overview

This page describes the core architecture of the LangDB AI Gateway, a unified platform for interfacing with a wide variety of Large Language Models (LLMs) and building agentic applications. It provides enterprise-grade observability, cost control, scalability, Model Context Protocol (MCP) support, and more.

Core Components

| Component | Purpose / Description | Enterprise Features / Notes |
|---|---|---|
| AI Gateway | Unified interface to 300+ LLMs using the OpenAI API format, with built-in observability and tracing. | Multi-tenancy, advanced cost control, and rate limiting. Contact LangDB for access. |
| Metadata Store (PostgreSQL) | Stores metadata related to API usage, configurations, and more. | For scalable or multi-tenant deployments, use managed PostgreSQL (e.g., AWS RDS, GCP Cloud SQL). |
| Cache Store (Redis) | Implements rolling cost control and rate limiting for API usage. | Redis integration for cost control and rate limiting is available in the Enterprise version. |
| Observability & Analytics Store (ClickHouse) | Stores and analyzes traces and logs for observability. Supports OpenTelemetry. | For large-scale deployments, use ClickHouse Cloud. Traces are stored in the `langdb.traces` table. |

Note:

- Metadata Store: Powered by PostgreSQL (consider AWS RDS or GCP Cloud SQL for enterprise deployments)

- Cache Store: Powered by Redis (enterprise only)

- Observability & Analytics Store: Powered by ClickHouse (consider ClickHouse Cloud for scale)
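Because the AI Gateway speaks the OpenAI API format, a client request looks like an ordinary OpenAI chat completion call that is sent to the gateway instead of to OpenAI. The sketch below only builds such a request with Python's standard library, so nothing is sent. The base URL, the `/chat/completions` path (borrowed from OpenAI's convention), the API key, and the model name are all placeholders, not documented LangDB values.

```python
import json
from urllib.request import Request

# Placeholder values (assumptions): use your tenant environment's endpoint and key.
GATEWAY_BASE_URL = "https://gateway.example.internal/v1"
API_KEY = "YOUR_API_KEY"


def build_chat_request(model: str, prompt: str) -> Request:
    """Build, but do not send, an OpenAI-style chat completion request."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return Request(
        url=f"{GATEWAY_BASE_URL}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {API_KEY}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Hypothetical model identifier; the names a gateway accepts depend on its configuration.
req = build_chat_request("gpt-4o-mini", "Summarize our Q3 usage.")
print(req.get_method(), req.full_url)
```

An existing OpenAI client library configured with the gateway's base URL should produce an equivalent request; passing `req` to `urllib.request.urlopen` would actually send this one.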

Environment Overview

LangDB provisions a dedicated environment for each tenant. This environment is isolated per tenant and is set up in a separate AWS account or GCP project, managed by LangDB. Customers connect securely to their provisioned environment from their own VPCs, ensuring strong network isolation and security.

LangDB itself operates a thin, shared public cloud environment (the "control plane") that is primarily responsible for:

- Provisioning new tenant environments

- Managing access control and user/tenant provisioning

- Handling external federated account connections (e.g., SSO)

- Hosting the LangDB Dashboard frontend application for configuration, monitoring, and management

All operational workloads, data storage, and LLM/MCP execution occur within the tenant-specific environment. The shared LangDB cloud handles only provisioning, access management, and dashboard hosting; it is not involved in data processing or LLM execution.

Customer Environment

- Integrates with customer identity providers (Active Directory, SAML, SSO).

- Users (AI apps, agents, administrators, and developers) interact with LangDB through secure endpoints.

LangDB Dashboard

- Centralized dashboard for configuration, monitoring, and management.

- Handles user and tenant provisioning, access control, and external federated account connections.

- All provisioning and access are centrally managed through LangDB Cloud and the Dashboard.

Tenant Environment (Execution Layer)

- Each tenant (enterprise deployment) is provisioned in a dedicated AWS account or GCP project.

- Communication between the tenant environment and the LangDB control plane is secured and managed.

- Provisioning is automated via Terraform.

Store Descriptions

Metadata Store (PostgreSQL)

Stores all configuration and metadata required for operation, including:

- Virtual models

- Virtual MCP servers

- Projects

- Guardrails

- Routers
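To make the list above concrete, here is a hypothetical in-memory model of a few of these entities, showing how a request's `model` field might be resolved through a router and then a virtual model. Every class, every field, and the "take the first candidate" routing policy are illustrative assumptions, not LangDB's actual schema or routing behavior; virtual MCP servers are omitted for brevity.

```python
from dataclasses import dataclass, field


# Hypothetical shapes for illustration only; not LangDB's metadata schema.
@dataclass
class VirtualModel:
    name: str                  # name clients send in the "model" field
    target_model: str          # underlying provider model it resolves to
    guardrails: list[str] = field(default_factory=list)


@dataclass
class Router:
    name: str
    candidates: list[str]      # virtual model names, in priority order


@dataclass
class Project:
    name: str
    virtual_models: dict[str, VirtualModel] = field(default_factory=dict)
    routers: dict[str, Router] = field(default_factory=dict)

    def resolve(self, requested: str) -> str:
        """Map a requested router or virtual model name to a provider model."""
        if requested in self.routers:
            # Simplest possible (assumed) policy: use the first candidate.
            requested = self.routers[requested].candidates[0]
        if requested in self.virtual_models:
            return self.virtual_models[requested].target_model
        return requested  # assume it is already a provider model name


project = Project("demo")
project.virtual_models["support-bot"] = VirtualModel("support-bot", "gpt-4o-mini", ["pii-filter"])
project.routers["default"] = Router("default", ["support-bot"])
print(project.resolve("default"))  # gpt-4o-mini
```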

Redis (Cache Store)

Used for fast, in-memory operations related to:

- Rate limiting & cost control

- LLM usage tracking

- MCP usage tracking
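Rolling cost control and rate limiting both reduce to counting events inside a sliding time window. The following in-memory sketch assumes one cap on request count and one cap on spend per window; the `RollingBudget` class and its parameters are hypothetical. Keeping this state in a shared store such as Redis, rather than in process memory, is what would let several gateway instances enforce the same limits.

```python
import time
from collections import deque
from typing import Optional


class RollingBudget:
    """Sliding-window limiter for request count and spend (illustrative only).

    Hypothetical class: state lives in process memory here, whereas a
    multi-instance deployment would keep it in a shared store such as Redis.
    """

    def __init__(self, window_s: float, max_requests: int, max_cost: float):
        self.window_s = window_s
        self.max_requests = max_requests
        self.max_cost = max_cost
        self._events: deque[tuple[float, float]] = deque()  # (timestamp, cost)

    def _evict(self, now: float) -> None:
        # Drop events that have rolled out of the window.
        while self._events and self._events[0][0] <= now - self.window_s:
            self._events.popleft()

    def try_consume(self, cost: float, now: Optional[float] = None) -> bool:
        """Record a request if it fits both limits; return whether it was allowed."""
        now = time.monotonic() if now is None else now
        self._evict(now)
        spent = sum(c for _, c in self._events)
        if len(self._events) >= self.max_requests or spent + cost > self.max_cost:
            return False
        self._events.append((now, cost))
        return True
```

A common way to move this state into Redis is one sorted set per key, scored by timestamp: `ZADD` records an event and `ZREMRANGEBYSCORE` evicts expired ones. This page does not say whether LangDB uses that exact pattern.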

ClickHouse (Analytics & Observability Store)

Stores analytics and observability data:

- Traces (API calls, LLM invocations, etc.)

- Metrics (performance, usage, etc.)

User and Tenant Provisioning

- User and tenant provisioning is centrally controlled via LangDB Cloud and Dashboard.

- External federated accounts (e.g., enterprise SSO) can be connected to LangDB Cloud for seamless access management.

Data Retention

- Data retention policies mainly apply to observability data (traces, metrics) stored in ClickHouse.

- Retention is enforced per subscription tier; traces are automatically cleared after the retention period expires.
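The retention rule amounts to comparing each trace's age with the period allowed by the tenant's tier. In this sketch the tier names and day counts in `RETENTION_DAYS` are invented placeholders, and traces are plain dictionaries with a hypothetical `timestamp` key.

```python
from datetime import datetime, timedelta, timezone

# Invented placeholder tiers and periods; actual values depend on the subscription.
RETENTION_DAYS = {"free": 7, "pro": 30, "enterprise": 365}


def is_expired(trace_time: datetime, tier: str, now: datetime) -> bool:
    """True if a trace recorded at trace_time is past its tier's retention period."""
    return now - trace_time > timedelta(days=RETENTION_DAYS[tier])


def purge(traces: list[dict], tier: str, now: datetime) -> list[dict]:
    """Keep only the traces still inside the retention window."""
    return [t for t in traces if not is_expired(t["timestamp"], tier, now)]


now = datetime(2024, 6, 1, tzinfo=timezone.utc)
traces = [
    {"id": "a", "timestamp": now - timedelta(days=3)},
    {"id": "b", "timestamp": now - timedelta(days=10)},
]
print([t["id"] for t in purge(traces, "free", now)])
```

In ClickHouse itself, expiry of this kind is usually declared with a table-level `TTL` expression on a MergeTree table, so the engine drops old rows during background merges; this page does not specify how LangDB configures it.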

MCP Server Deployment

- MCP servers are deployed in a serverless fashion using AWS Lambda or GCP Cloud Run for scalability and cost efficiency.
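MCP servers exchange JSON-RPC 2.0 messages, so running one serverlessly mostly means wrapping a request dispatcher in the platform's entry point. The sketch below is a hypothetical, stateless AWS Lambda handler for an HTTP-triggered (proxy-style) invocation that exposes one made-up `echo` tool through the MCP `tools/list` and `tools/call` methods. It deliberately omits the `initialize` handshake, notifications, sessions, authentication, and base64-encoded bodies, so it is neither a complete MCP server nor LangDB's deployment code.

```python
import json

# Hypothetical example tool; a real server would expose its own tools.
TOOLS = [{
    "name": "echo",
    "description": "Return the input text unchanged.",
    "inputSchema": {
        "type": "object",
        "properties": {"text": {"type": "string"}},
        "required": ["text"],
    },
}]


def _result(req_id, result):
    return {"jsonrpc": "2.0", "id": req_id, "result": result}


def _error(req_id, code, message):
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}


def dispatch(msg: dict) -> dict:
    """Answer one JSON-RPC request for a minimal, stateless MCP tool server."""
    req_id, method = msg.get("id"), msg.get("method")
    if method == "tools/list":
        return _result(req_id, {"tools": TOOLS})
    if method == "tools/call":
        params = msg.get("params", {})
        if params.get("name") != "echo":
            return _error(req_id, -32602, "Unknown tool")
        text = params.get("arguments", {}).get("text", "")
        return _result(req_id, {"content": [{"type": "text", "text": text}]})
    return _error(req_id, -32601, "Method not found")


def handler(event, context):
    """AWS Lambda entry point: parse the HTTP body and return a proxy-style response."""
    msg = json.loads(event.get("body") or "{}")
    return {
        "statusCode": 200,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(dispatch(msg)),
    }
```

On Cloud Run, the same `dispatch` function would sit behind an HTTP server running in a container instead of behind a Lambda handler.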