Deploying on AWS Cloud

AWS Deployment

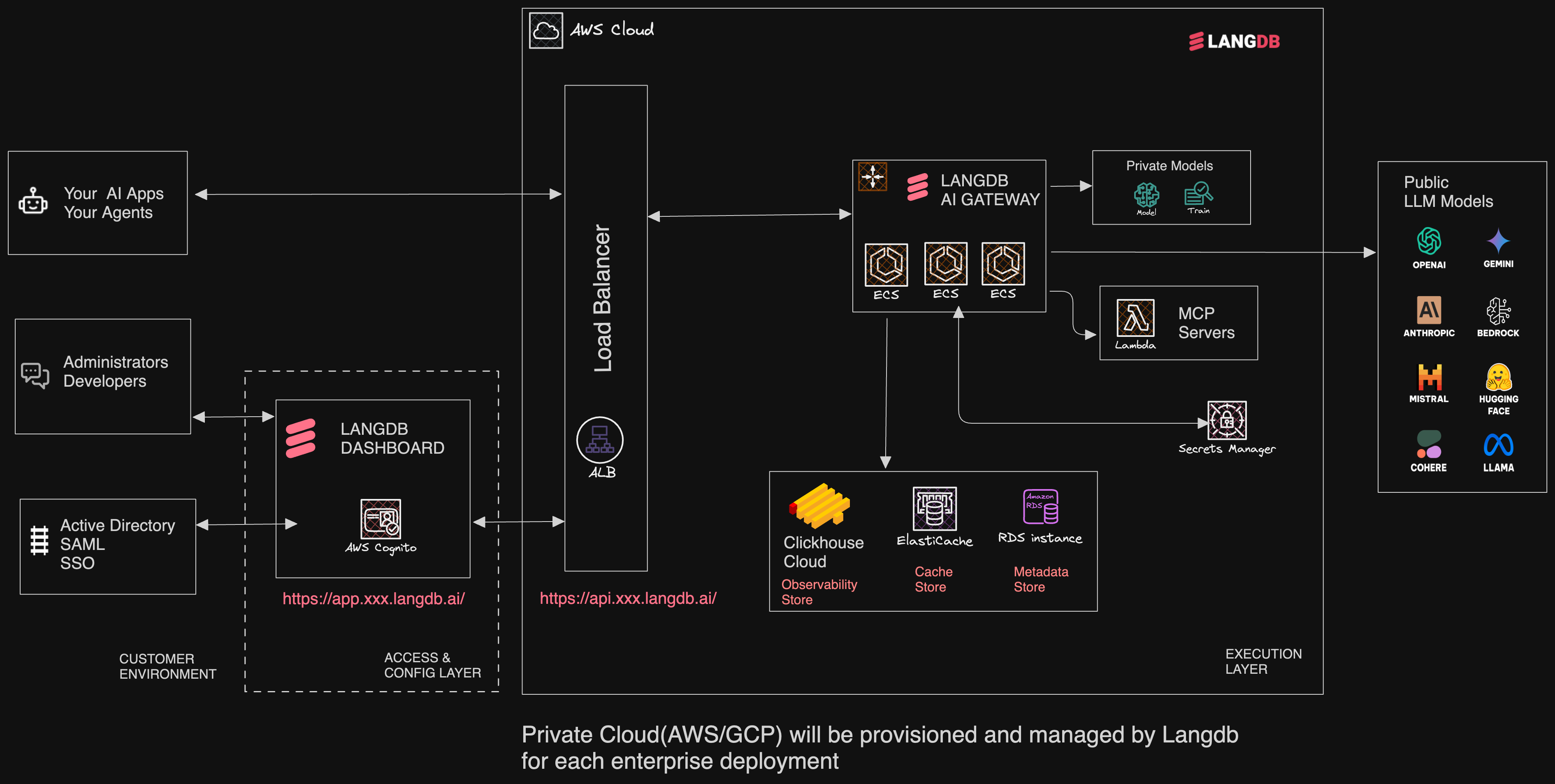

This section describes how AI Gateway and its supporting components are deployed on AWS to provide enterprise-grade scalability, observability, and security.

Software Components

| Component | Purpose / Description | AWS Service | Scaling |
|---|---|---|---|
| LLM Gateway | Unified interface to 300+ LLMs using the OpenAI API format. Built-in observability and tracing. | Amazon ECS (Elastic Container Service) | ECS Auto Scaling based on CPU/memory or custom CloudWatch metrics. |
| Metadata Store (PostgreSQL) | Stores metadata related to API usage, configurations, and more. | Amazon RDS (PostgreSQL) | Vertical scaling (instance size), Multi-AZ support. |
| Cache Store | Implements rolling cost control and rate limiting for API usage. | Amazon ElastiCache (Redis) | Scale by adding shards/replicas, Multi-AZ support. |
| Observability & Analytics Store (ClickHouse) | Provides observability by storing and analyzing traces/logs. Supports OpenTelemetry. | ClickHouse Cloud (external) | Scales independently; ensure sufficient network throughput for trace/log ingestion. |
| Load Balancing | Distributes incoming traffic to ECS tasks, enabling high availability and SSL termination. | Amazon ALB (Application Load Balancer) | Scales automatically to handle incoming traffic; supports multi-AZ deployments. |
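The Cache Store row mentions rolling cost control and rate limiting. The sketch below shows one common way to enforce both with a sliding window. It is not LangDB's implementation: it keeps state in an in-process dictionary instead of ElastiCache, and the class name, window size, and limits are hypothetical. In a Redis-backed version, the same window is often kept in a sorted set scored by timestamp.

```python
from collections import defaultdict, deque
from dataclasses import dataclass, field
from typing import DefaultDict, Deque, Tuple


@dataclass
class SlidingWindowLimiter:
    """Hypothetical per-key limiter: caps request count and spend within a rolling window.

    An in-memory stand-in for state that would live in ElastiCache (Redis).
    """

    window_seconds: float
    max_requests: int
    max_cost: float
    # Per key: (timestamp, cost) events still inside the window, oldest first.
    _events: DefaultDict[str, Deque[Tuple[float, float]]] = field(
        default_factory=lambda: defaultdict(deque), repr=False
    )

    def allow(self, key: str, cost: float, now: float) -> bool:
        """Admit and record the call if both limits hold; otherwise reject it unrecorded."""
        events = self._events[key]
        # Evict events that have aged out of the rolling window.
        while events and events[0][0] <= now - self.window_seconds:
            events.popleft()
        spent = sum(c for _, c in events)
        if len(events) >= self.max_requests or spent + cost > self.max_cost:
            return False
        events.append((now, cost))
        return True


limiter = SlidingWindowLimiter(window_seconds=60, max_requests=2, max_cost=1.0)
print(limiter.allow("team-a", 0.4, now=0))   # True
print(limiter.allow("team-a", 0.4, now=10))  # True
print(limiter.allow("team-a", 0.1, now=20))  # False: request cap reached
print(limiter.allow("team-a", 0.1, now=61))  # True: the t=0 event has expired
```

Because cost is checked against the rolling sum rather than a fixed calendar bucket, a burst of spend cannot be "reset" by waiting for a window boundary.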

Architecture Overview

The LangDB service is deployed on AWS with a scalable architecture designed for high availability, security, and performance. It is built on AWS managed services to minimize operational overhead while keeping full control over the application.

Components

Networking and Entry Points

- AWS Region: All resources are deployed within a single AWS region for low-latency communication

- VPC: A dedicated Virtual Private Cloud isolates the application resources

- ALB: Application Load Balancer serves as the entry point, routing requests from `https://api.{region}.langdb.ai` to the appropriate services
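Because the gateway speaks the OpenAI API format, a client mainly needs the regional base URL. The following sketch builds, but does not send, such a request with the Python standard library. The `/v1/chat/completions` path, the bearer-token header, the region value, the model name, and the function name are assumptions modeled on the OpenAI format, not confirmed LangDB details.

```python
import json
import urllib.request

BASE_URL_TEMPLATE = "https://api.{region}.langdb.ai"


def build_chat_request(region: str, api_key: str, model: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the regional endpoint.

    The path and header layout are assumptions based on the OpenAI API format.
    """
    url = BASE_URL_TEMPLATE.format(region=region) + "/v1/chat/completions"
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        url,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request("us-east-1", "sk-example", "gpt-4o-mini", "Hello")
print(req.full_url)      # https://api.us-east-1.langdb.ai/v1/chat/completions
print(req.get_method())  # POST
# urllib.request.urlopen(req) would send it through the ALB; omitted here.
```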

Core Services

- ECS Cluster: Container orchestration for the LangDB service
  - Multiple LangDB service instances distributed across availability zones for redundancy
  - Auto-scaling capabilities based on load
  - Containerized deployment for consistency across environments
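To reason about how many LangDB tasks load-based auto-scaling might run, a simple proportional rule is useful: scale the current task count by the ratio of the observed metric to its target, then clamp to the configured bounds. This approximates the behavior of a target-tracking policy; it is not the exact algorithm AWS applies, and the function name, bounds, and numbers are hypothetical.

```python
import math


def desired_task_count(current_tasks: int, metric_value: float, target_value: float,
                       min_tasks: int, max_tasks: int) -> int:
    """Approximate desired ECS task count for a target-tracking style policy.

    Scales capacity proportionally so the per-task metric (e.g. average CPU %)
    moves toward the target, then clamps to [min_tasks, max_tasks].
    """
    if target_value <= 0:
        raise ValueError("target_value must be positive")
    raw = math.ceil(current_tasks * metric_value / target_value)
    return max(min_tasks, min(max_tasks, raw))


# 4 tasks averaging 90% CPU against a 60% target: ceil(4 * 90 / 60) = 6 tasks.
print(desired_task_count(4, 90.0, 60.0, min_tasks=2, max_tasks=10))  # 6
```

The lower bound matters for high availability: keeping at least one task per availability zone preserves redundancy even when load is low.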

Data Storage

- RDS (PostgreSQL): Managed relational database for persistent storage, dedicated to metadata

- ElastiCache (Redis) Cluster: In-memory caching layer
  - Used for caching, cost control, and rate limiting
  - Multiple nodes for high availability

- ClickHouse Cloud: Analytics database for high-performance data processing
  - Deployed in the same AWS region but outside the VPC
  - Managed service for analytical queries and data warehousing

Authentication & Security

- Cognito: User authentication and identity management

- Lambda: Serverless functions for authentication workflows

- SES: Simple Email Service for email communications related to authentication

Secrets Management

- Secrets Vault: AWS Secrets Manager for secure storage of:
  - Provider keys
  - Other sensitive credentials
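Services usually fetch provider keys from Secrets Manager on demand and cache them briefly so that not every request triggers an API call. The sketch below assumes a boto3 Secrets Manager client (created with `boto3.client("secretsmanager")`), whose `get_secret_value(SecretId=...)` call returns a string secret under the `SecretString` key. The cache class, TTL, and secret name are hypothetical, and a stub client stands in for AWS so the example runs offline.

```python
import time
from typing import Any, Callable, Dict, Tuple


class CachedSecrets:
    """Hypothetical TTL cache in front of a Secrets Manager client.

    `client` is anything exposing get_secret_value(SecretId=...) -> {"SecretString": ...},
    such as boto3.client("secretsmanager").
    """

    def __init__(self, client: Any, ttl_seconds: float = 300.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._client = client
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache: Dict[str, Tuple[float, str]] = {}  # secret_id -> (fetched_at, value)

    def get(self, secret_id: str) -> str:
        now = self._clock()
        hit = self._cache.get(secret_id)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # still fresh: no API call
        value = self._client.get_secret_value(SecretId=secret_id)["SecretString"]
        self._cache[secret_id] = (now, value)
        return value


class StubClient:
    """Offline stand-in that counts calls; replace with boto3.client("secretsmanager")."""

    def __init__(self) -> None:
        self.calls = 0

    def get_secret_value(self, SecretId: str) -> dict:
        self.calls += 1
        return {"SecretString": f"value-of-{SecretId}"}


stub = StubClient()
secrets = CachedSecrets(stub, ttl_seconds=300)
secrets.get("langdb/provider-keys/openai")  # hypothetical secret name
secrets.get("langdb/provider-keys/openai")
print(stub.calls)  # 1: the second lookup is served from the cache
```

A short TTL keeps rotated keys from lingering in memory for long while still removing most Secrets Manager calls from the request path.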

Data Flow

- Client requests reach the ALB via `https://api.{region}.langdb.ai`

- The ALB routes requests to available LangDB service instances in the ECS cluster

- LangDB services interact with:
  - RDS (PostgreSQL) for persistent data and metadata operations
  - ElastiCache for caching, cost control, and rate limiting
  - ClickHouse Cloud for analytics and data warehousing

- Authentication is handled through Cognito, with Lambda functions for custom authentication flows

- Sensitive information is securely retrieved from Secrets Manager as needed
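In the routing step of this flow, the ALB sends each request to a healthy LangDB task. Round robin is the ALB's default routing algorithm for target groups (least outstanding requests is an alternative), so the sketch below models round robin that skips targets failing their health checks. Target names and the health map are hypothetical.

```python
from itertools import cycle
from typing import Dict, Iterator, List


def round_robin(targets: List[str], healthy: Dict[str, bool]) -> Iterator[str]:
    """Yield targets in rotation, skipping any whose health check is failing.

    `healthy` is read on every step, so health changes take effect immediately;
    targets missing from the map are treated as unhealthy.
    """
    if not targets:
        raise ValueError("no registered targets")
    ring = cycle(targets)
    while True:
        # Try each target at most once per request before giving up.
        for _ in range(len(targets)):
            candidate = next(ring)
            if healthy.get(candidate, False):
                yield candidate
                break
        else:
            raise RuntimeError("no healthy targets")


tasks = ["task-az-a", "task-az-b", "task-az-c"]
health = {"task-az-a": True, "task-az-b": False, "task-az-c": True}
router = round_robin(tasks, health)
print([next(router) for _ in range(4)])  # ['task-az-a', 'task-az-c', 'task-az-a', 'task-az-c']
```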

Security Considerations

- All components except ClickHouse Cloud are contained within the VPC for network isolation

- Secure connections to ClickHouse Cloud are established from within the VPC

- Authentication is managed through AWS Cognito

- Secrets are stored in AWS Secrets Manager

- All communication between services uses encryption in transit
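Encryption in transit is largely a matter of client configuration. For example, PostgreSQL clients built on libpq accept an `sslmode` parameter, where `verify-full` requires TLS and also checks the server certificate and hostname against a trusted CA file given by `sslrootcert`; redis-py treats a `rediss://` URL as a TLS connection, which suits an ElastiCache cluster with in-transit encryption enabled. The helper names, hostnames, and certificate path below are hypothetical.

```python
from urllib.parse import quote, urlencode


def postgres_dsn(host: str, db: str, user: str, password: str,
                 root_cert: str = "/etc/ssl/rds-ca-bundle.pem") -> str:
    """libpq-style URI that requires TLS and verifies the server certificate and hostname."""
    # safe="/" keeps the certificate path readable; credentials are fully percent-encoded.
    params = urlencode({"sslmode": "verify-full", "sslrootcert": root_cert}, safe="/")
    return f"postgresql://{quote(user, safe='')}:{quote(password, safe='')}@{host}:5432/{db}?{params}"


def redis_url(host: str, port: int = 6379) -> str:
    """The rediss:// scheme makes redis-py open a TLS connection."""
    return f"rediss://{host}:{port}/0"


print(redis_url("cache.internal"))  # rediss://cache.internal:6379/0
```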

Operational Benefits

- Scalability: The architecture supports horizontal scaling of LangDB service instances

- High Availability: Multiple instances across availability zones

- Managed Services: Leveraging AWS managed services reduces operational overhead

Deployment Process

LangDB infrastructure is deployed and managed using Terraform, providing infrastructure-as-code capabilities with the following benefits:

Terraform Architecture

- Modular Structure: The deployment code is organized into reusable Terraform modules that encapsulate specific infrastructure components (networking, compute, storage, etc.)

- Environment-Specific Variables: `.tfvars` files manage environment-specific configurations (dev, staging, prod)

- State Management: Terraform state is stored remotely to enable collaboration and version control
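A small wrapper can make the per-environment convention explicit. The sketch below only assembles the command lines for `terraform init`, `plan`, and `apply`, passing the environment's file through Terraform's `-var-file` flag and saving the plan with `-out`. The `environments/<env>.tfvars` layout, the plan file name, and the function name are assumptions, not the repository's actual structure.

```python
from pathlib import Path
from typing import List

ENVIRONMENTS = ("dev", "staging", "prod")


def terraform_commands(env: str, workdir: str = ".") -> List[List[str]]:
    """Return argv lists for init/plan/apply against one environment's .tfvars file.

    Assumes a hypothetical layout of environments/<env>.tfvars under workdir.
    """
    if env not in ENVIRONMENTS:
        raise ValueError(f"unknown environment: {env!r}")
    var_file = Path(workdir) / "environments" / f"{env}.tfvars"
    plan_file = f"{env}.tfplan"
    return [
        ["terraform", "init"],  # initializes providers and the remote state backend
        ["terraform", "plan", f"-var-file={var_file}", f"-out={plan_file}"],
        ["terraform", "apply", plan_file],  # applies exactly the saved, reviewed plan
    ]


for argv in terraform_commands("staging"):
    print(" ".join(argv))
# Each argv could be passed to subprocess.run(argv, check=True).
```

Applying a saved plan file, rather than re-planning at apply time, ensures that what was reviewed is what gets changed.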

Deployment Workflow

- Configuration Management: Environment-specific variables are defined in `.tfvars` files

- Resource Provisioning: Terraform creates and configures all AWS resources, including:
  - VPC and networking components
  - ECS Fargate tasks and container configurations
  - PostgreSQL (RDS) databases and Redis (ElastiCache) clusters
  - Authentication services (Cognito) and Lambda functions
  - Secrets Manager entries and access controls

- Dependency Management: Terraform handles resource dependencies, ensuring proper creation order
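Terraform derives creation order from the references between resources, forming a dependency graph, and creates independent resources in parallel. The same idea can be illustrated with Python's `graphlib.TopologicalSorter` (Python 3.9+), grouping resources into "waves" that could be created concurrently. The node names and edges are a simplified, hypothetical view of the resources listed above, not the actual module graph.

```python
from graphlib import TopologicalSorter
from typing import Dict, List, Set

# Each key depends on the resources in its set (hypothetical, simplified graph).
DEPENDENCIES: Dict[str, Set[str]] = {
    "vpc": set(),
    "subnets": {"vpc"},
    "security_groups": {"vpc"},
    "secrets": set(),
    "auth_lambda": set(),
    "cognito_user_pool": {"auth_lambda"},  # user pool triggers reference the Lambda
    "rds_postgres": {"subnets", "security_groups"},
    "elasticache_redis": {"subnets", "security_groups"},
    "alb": {"subnets", "security_groups"},
    "ecs_fargate_service": {"alb", "rds_postgres", "elasticache_redis", "secrets"},
}


def creation_waves(deps: Dict[str, Set[str]]) -> List[List[str]]:
    """Group nodes into batches whose dependencies are all satisfied by earlier batches."""
    ts = TopologicalSorter(deps)
    ts.prepare()  # also raises CycleError if the graph has a cycle
    waves = []
    while ts.is_active():
        ready = sorted(ts.get_ready())
        waves.append(ready)
        ts.done(*ready)
    return waves


for wave in creation_waves(DEPENDENCIES):
    print(wave)
# ['auth_lambda', 'secrets', 'vpc']
# ['cognito_user_pool', 'security_groups', 'subnets']
# ['alb', 'elasticache_redis', 'rds_postgres']
# ['ecs_fargate_service']
```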

Maintenance & Updates

Ongoing infrastructure maintenance is managed through Terraform:

- Scaling resources up/down based on demand

- Applying security patches and updates

- Modifying configurations for performance optimization

- Adding new resources or services as needed