Working with Models

Managing and Adding New Models

The platform provides a flexible management API that allows you to publish and manage machine learning models at both the tenant and project levels. This enables organizations to control access and visibility of models, supporting both public and private use cases.

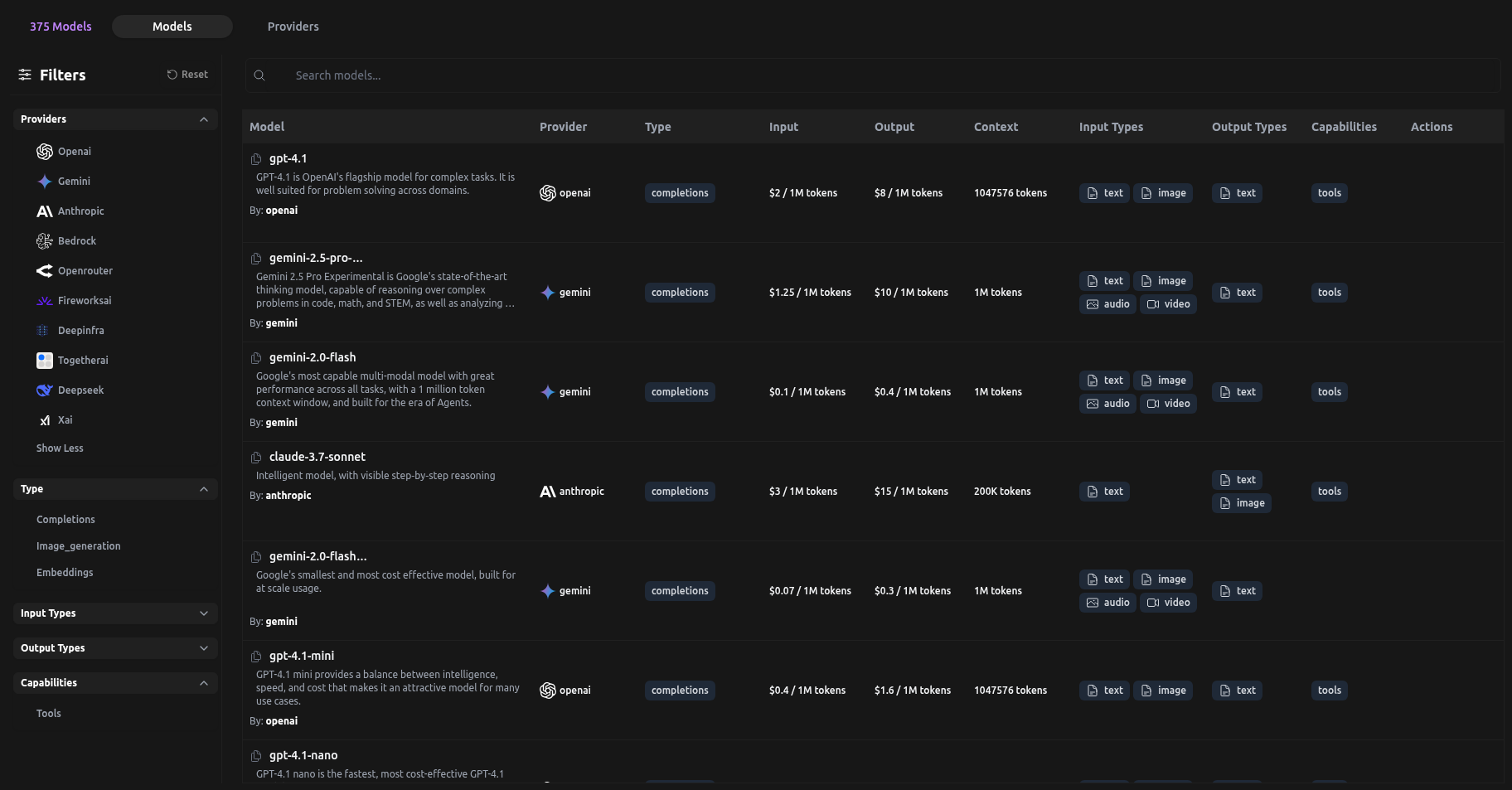

Model Listing on LangDB

Model Types

- Public Models:
  - Can be added without specifying a project ID.
  - Accessible to all users on this deployment.
  - In enterprise deployments, public models are added monthly.
  - Specific models can be requested by contacting our support team.
- Private Models:
  - Require a `project_id` and a `provider_id` for a known provider with a pre-configured secret.
  - Access is restricted to the specified project and provider.
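The public/private rules above can be checked on the client before a model is published. The following is a minimal sketch, not the platform's own validation: the function name `check_visibility_fields` is hypothetical, and it assumes the payload uses the `public`, `project_id`, and `provider_id` fields described on this page.

```python
from typing import Any


def check_visibility_fields(payload: dict[str, Any]) -> list[str]:
    """Return a list of problems with the visibility-related fields.

    Hypothetical client-side helper; the server may apply different rules.
    """
    problems: list[str] = []
    if payload.get("public", False):
        # Public models are deployment-wide, so project and provider
        # scoping fields are expected to be left out.
        for field in ("project_id", "provider_id"):
            if payload.get(field):
                problems.append(f"public models should omit {field}")
    else:
        # Private models are scoped to one project and one known provider.
        for field in ("project_id", "provider_id"):
            if not payload.get(field):
                problems.append(f"private models require {field}")
    return problems


public_problems = check_visibility_fields({"model_name": "my-model", "public": True})
private_problems = check_visibility_fields(
    {"model_name": "my-model", "public": False, "project_id": "<project_id>"}
)
```

Here `public_problems` is empty, while `private_problems` reports the missing `provider_id`.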

Model Parameters

When publishing a model, you can specify:

- Request/Response Mapping:
  - By default, models are expected to be OpenAI-compatible.
  - You can also specify custom request/response processors using dynamic scripts (see 'Coming Soon' below).
- Model Parameters Schema:
  - A JSON schema describing the parameters that can be sent with requests to the model.

Management API to Publish New Models

The management API allows you to register new models with the platform. Below is an example of how to use the API to publish a new model.

Sample cURL Request

curl -X POST https://api.xxx.langdb.ai/admin/models \
  -H "Authorization: Bearer <your_token>" \
  -H "X-Admin-Key: <admin_key>" \
  -H "Content-Type: application/json" \
  -d '{
    "model_name": "my-model",
    "description": "Description of the LLM Model",
    "provider_id": "123e4567-e89b-12d3-a456-426614174000",
    "project_id": "<project_id>",
    "public": false,
    "request_response_mapping": "openai-compatible",
    "model_type": "completions",
    "input_token_price": "0.00001",
    "output_token_price": "0.00003",
    "context_size": 128000,
    "capabilities": ["tools"],
    "input_types": ["text", "image"],
    "output_types": ["text", "image"],
    "tags": [],
    "owner_name": "openai",
    "priority": 0,
    "model_name_in_provider": "my-model-v1.2",
    "parameters": {
      "top_k": {
        "min": 0,
        "step": 1,
        "type": "int",
        "default": 0,
        "required": false,
        "description": "Limits the token sampling to only the top K tokens. A value of 0 disables this setting, allowing the model to consider all tokens."
      },
      "top_p": {
        "max": 1,
        "min": 0,
        "step": 0.05,
        "type": "float",
        "default": 1,
        "required": false,
        "description": "An alternative to sampling with temperature, called nucleus sampling, where the model considers the results of the tokens with top_p probability mass. So 0.1 means only the tokens comprising the top 10% probability mass are considered. We generally recommend altering this or temperature but not both."
      }
    }
  }'
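For scripted publishing, the same request can be assembled in Python with the standard library. This is a rough sketch under stated assumptions: the helper name `build_publish_request` and the base URL `https://platform.example.invalid` are placeholders, the payload is a trimmed copy of the sample above, and the request is only built, not sent.

```python
import json
import urllib.request


def build_publish_request(
    base_url: str, token: str, admin_key: str, payload: dict
) -> urllib.request.Request:
    """Build (but do not send) a POST request to the /admin/models endpoint."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/admin/models",
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "X-Admin-Key": admin_key,
            "Content-Type": "application/json",
        },
    )


# Trimmed copy of the sample payload; the values are illustrative only.
payload = {
    "model_name": "my-model",
    "description": "Description of the LLM Model",
    "provider_id": "123e4567-e89b-12d3-a456-426614174000",
    "project_id": "<project_id>",
    "public": False,
    "request_response_mapping": "openai-compatible",
    "model_type": "completions",
    "model_name_in_provider": "my-model-v1.2",
}

# Placeholder base URL; substitute the URL of your own deployment.
request = build_publish_request(
    "https://platform.example.invalid", "<your_token>", "<admin_key>", payload
)
```

Passing `request` to `urllib.request.urlopen` would send it. Building the request separately makes it easy to inspect the headers and body before anything reaches the API.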

API properties

| Field | Type | Description |
|---|---|---|
| model_name | String | The display name of the model |
| description | String | A detailed description of the model's capabilities and use cases |
| provider_info_id | UUID | The UUID of the provider that offers this model |
| project_id | UUID | Which project this model belongs to |
| public | Boolean | Whether the model is publicly discoverable or private |
| request_response_mapping | String | "openai-compatible" or a custom mapping script |
| model_type | String | The type of model (e.g., "completions", "image", "embedding") |
| owner_name | String | The name of the model's owner or creator |
| priority | i32 | Priority level for the model in listings (higher numbers indicate higher priority) |
| input_token_price | Nullable float | Price per input token |
| output_token_price | Nullable float | Price per output token |
| context_size | Nullable u32 | Maximum context window size in tokens |
| capabilities | String[] | List of model capabilities (e.g., "tools") |
| input_types | String[] | Supported input formats (e.g., "text", "image", "audio") |
| output_types | String[] | Supported output formats (e.g., "text", "image", "audio") |
| tags | String[] | Classification tags for the model |
| type_prices | Map<String, float> | JSON string containing prices for different usage types (used for image generation model pricing) |
| mp_price | Nullable float | Price by megapixel (used for image generation model pricing) |
| model_name_in_provider | String | The model's identifier in the provider's system |
| parameters | Map<String, Map<String, any>> | Additional configuration parameters as JSON |

Check out the full API specification: POST /admin/models
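To see how the per-token price fields translate into a request cost, here is a small worked example. It assumes cost is simply linear in token counts, which this page does not confirm; the function name `estimate_cost` is hypothetical, and the prices are the ones from the sample request.

```python
from decimal import Decimal


def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_token_price: str,
    output_token_price: str,
) -> Decimal:
    """Estimate request cost, assuming linear per-token pricing.

    Decimal avoids the rounding noise that binary floats introduce
    when multiplying very small prices.
    """
    return (
        Decimal(input_token_price) * input_tokens
        + Decimal(output_token_price) * output_tokens
    )


# 1,000 input tokens and 500 output tokens at the sample prices.
cost = estimate_cost(1000, 500, "0.00001", "0.00003")
```

With these numbers the input side contributes 0.01 and the output side 0.015, so `cost` equals `Decimal("0.025")`.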

Usage of API

- Replace `<platform-url>`, `<your_token>`, and `<project_id>` with your actual values.
- Set `public` to `true` for public models (omit `project_id` and `provider_id`), or `false` for private models.
- The `parameters` field allows you to define the expected parameters for your model.

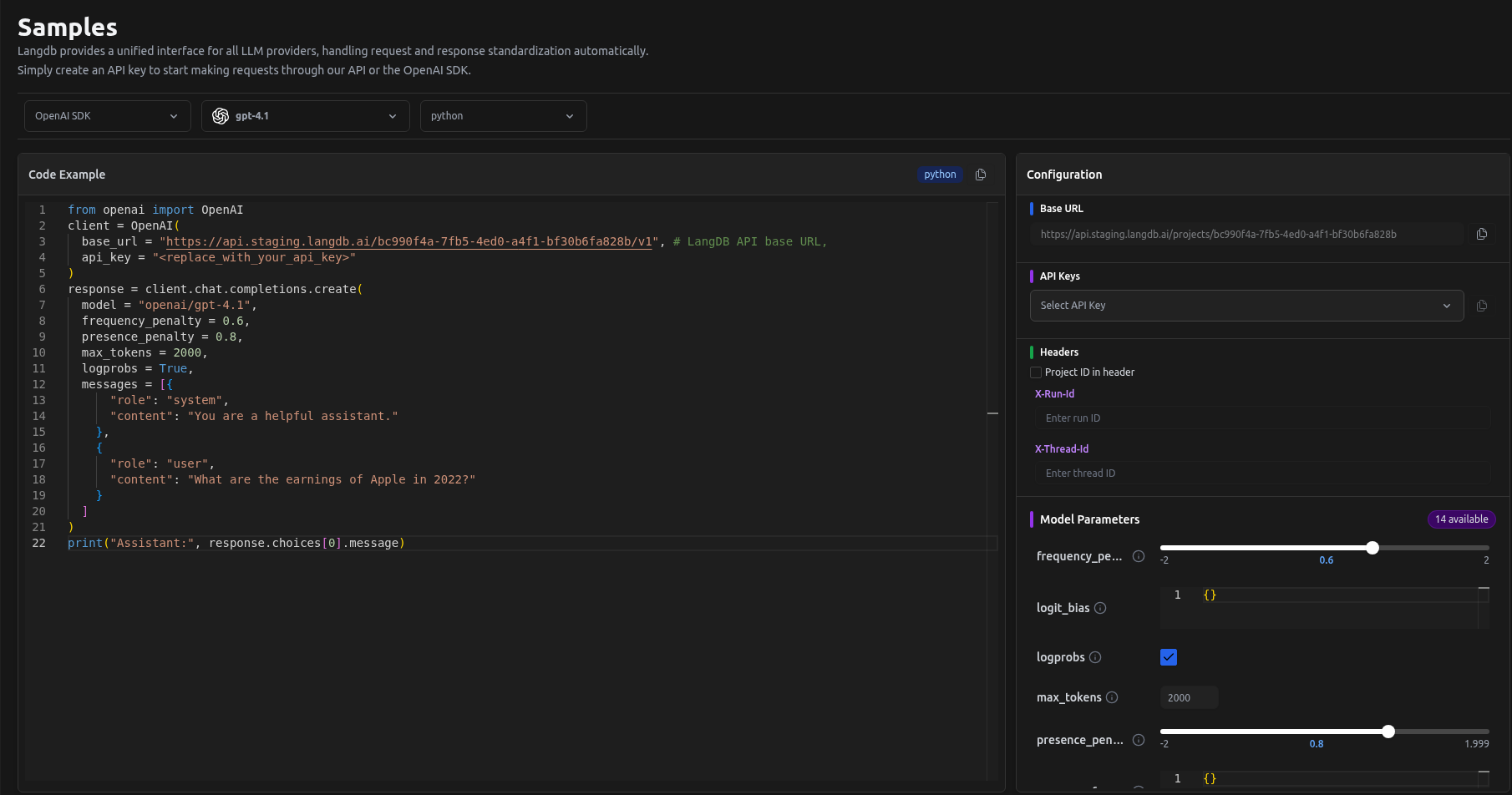

Setting Parameters for a sample request on LangDB
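The parameter definitions in the sample request (`min`, `max`, `type`, `default`, `required`) suggest how a client might check values before sending them. The sketch below is one possible interpretation, not the platform's actual behavior: `resolve_parameters` is a hypothetical helper, and the `step` key is ignored here.

```python
from typing import Any

# Parameter definitions copied from the sample request (descriptions omitted).
PARAMETERS: dict[str, dict[str, Any]] = {
    "top_k": {"min": 0, "step": 1, "type": "int", "default": 0, "required": False},
    "top_p": {"max": 1, "min": 0, "step": 0.05, "type": "float", "default": 1, "required": False},
}


def resolve_parameters(
    schema: dict[str, dict[str, Any]], supplied: dict[str, Any]
) -> dict[str, Any]:
    """Fill in defaults and check types and bounds for supplied values."""
    resolved: dict[str, Any] = {}
    for name, spec in schema.items():
        if name not in supplied:
            if spec.get("required", False):
                raise ValueError(f"missing required parameter: {name}")
            if "default" in spec:
                resolved[name] = spec["default"]
            continue
        value = supplied[name]
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool):
            raise ValueError(f"{name} must be a number")
        if spec.get("type") == "int" and not isinstance(value, int):
            raise ValueError(f"{name} must be an integer")
        if spec.get("type") == "float" and not isinstance(value, (int, float)):
            raise ValueError(f"{name} must be a number")
        if "min" in spec and value < spec["min"]:
            raise ValueError(f"{name} must be >= {spec['min']}")
        if "max" in spec and value > spec["max"]:
            raise ValueError(f"{name} must be <= {spec['max']}")
        resolved[name] = value
    return resolved


resolved = resolve_parameters(PARAMETERS, {"top_p": 0.9})
```

In this run `top_p` keeps the supplied value and `top_k` falls back to its default of 0. A value such as `top_p = 1.5` raises `ValueError` because it exceeds `max`.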

Dynamic Request/Response Mapping (Coming Soon)

The platform will soon support dynamic request/response mapping using custom scripts. This feature will allow you to define how requests are transformed before being sent to the model, and how responses are processed before being returned to the client. This will enable support for a wide variety of model APIs and custom workflows.

Stay tuned!