Connecting LLMs to the Web with Real-Time Search Tools

Traditional LLMs rely on static training data, which makes them prone to outdated answers, hallucinations, and gaps in context. LangDB's built-in Search tool addresses this by fetching real-time data at query time, improving accuracy and contextual relevance.

The Challenge: Stale or Incomplete Knowledge

- Static Corpus: Most LLMs are trained on large datasets, but that training is typically a snapshot in time. Once trained, the model doesn’t automatically update its knowledge.

- Inaccurate or Outdated Information: Without a method to query current data, an LLM may provide answers that were correct at the time of training but are no longer valid.

- Limited Context: Even if the model has relevant data, it might not surface the best context without a guided search mechanism.

Introducing LangDB Search Tool

LangDB’s built-in Search tool addresses these challenges by allowing real-time querying of databases, documents, or external sources:

- On-Demand Queries: Instead of relying solely on the LLM’s training data, the Search tool can fetch the latest information at query time.

- Integrated with LangDB: The search functionality is built into LangDB, so developers can use it without additional setup.

- API-Ready: The Search tool can also be called through the LangDB API.

Search vs No-Search

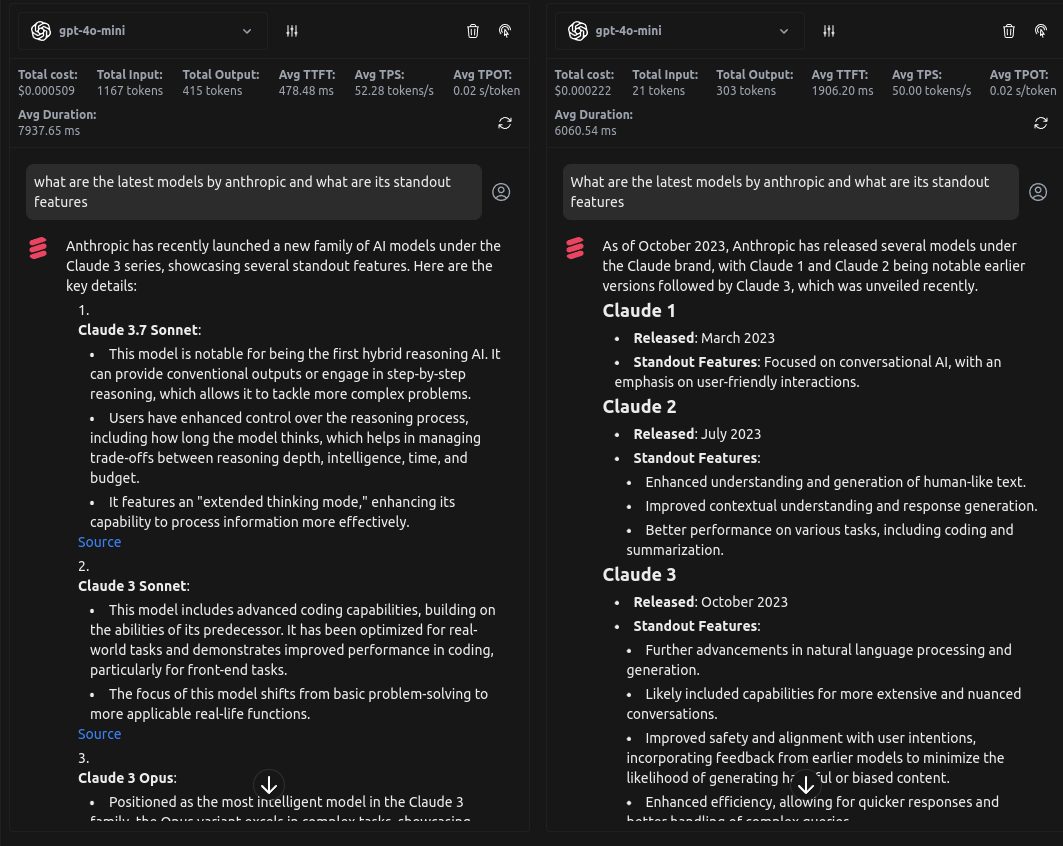

Below is a side-by-side comparison of using LangDB’s search tool versus relying on static model knowledge. The left image shows results with search enabled, pulling real-time, up-to-date information. The right image shows the same query without search, leading to more generic and potentially outdated responses.

| Feature | No Search | With LangDB Search |
|---|---|---|
| Data Freshness | Static, based on training corpus | Dynamic, fetches real-time information |
| Accuracy | Prone to outdated or incorrect responses | Pulls from latest sources, improving reliability |
| Context Depth | Limited by internal model memory | Integrates external sources for better insights |
| Hallucination Risk | Higher | Lower, as responses are backed by retrieved data |

Using Search through API

LangDB’s Search tool can be enabled with a single API call. The example below sends a chat completion request with web search turned on:

```bash
curl 'https://api.us-east-1.langdb.ai/{LangDB_ProjectID}/v1/chat/completions' \
  -H 'authorization: Bearer LangDBAPIKey' \
  -H 'Content-Type: application/json' \
  -d '{
    "model": "openai/gpt-4o-mini",
    "mcp_servers": [{ "name": "websearch", "type": "in-memory"}],
    "messages": [
      {
        "role": "user",
        "content": "what are the latest models by anthropic and what are its standout features?"
      }
    ]
  }'
```

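The `mcp_servers` entry attaches the `websearch` tool to the request, so the model can draw on live search results when it answers. This improves accuracy and relevance, especially for time-sensitive questions.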
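To reproduce the Search vs No-Search comparison from a terminal, the same prompt can be sent twice, once with the `mcp_servers` entry and once without it. The following is a minimal shell sketch under a few assumptions: `build_payload` and `ask` are hypothetical helper names, `LANGDB_PROJECT_ID` and `LANGDB_API_KEY` are assumed environment variables holding your own values, and leaving out `mcp_servers` is assumed to be how a request runs without search (the request above only shows the enabled form).

```bash
# Hypothetical helper (not part of LangDB): prints a chat completions request body.
#   $1 = prompt text
#   $2 = "search" to attach the websearch tool (default), anything else to omit it
build_payload() {
  local prompt mode tools
  # Escape backslashes and double quotes so the prompt stays valid JSON.
  # Newlines and other control characters are not handled in this sketch.
  prompt=$(printf '%s' "$1" | sed 's/\\/\\\\/g; s/"/\\"/g')
  mode=${2:-search}
  tools=""
  if [ "$mode" = "search" ]; then
    tools='"mcp_servers": [{ "name": "websearch", "type": "in-memory" }],'
  fi
  printf '{"model": "openai/gpt-4o-mini", %s "messages": [{"role": "user", "content": "%s"}]}\n' \
    "$tools" "$prompt"
}

# Hypothetical helper: sends the payload to the endpoint used in the example above.
# LANGDB_PROJECT_ID and LANGDB_API_KEY are assumed variable names, not official ones.
ask() {
  curl -sS "https://api.us-east-1.langdb.ai/${LANGDB_PROJECT_ID:?set LANGDB_PROJECT_ID}/v1/chat/completions" \
    -H "authorization: Bearer ${LANGDB_API_KEY:?set LANGDB_API_KEY}" \
    -H 'Content-Type: application/json' \
    -d "$(build_payload "$1" "${2:-search}")"
}
```

Calling `ask "what are the latest models by anthropic?" search` and then the same prompt with `none` as the second argument sends the two variants for comparison. If the response follows the OpenAI-style chat completions shape (an assumption based on the endpoint path) and `jq` is installed, piping the output through `jq -r '.choices[0].message.content'` should print only the answer text.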

Conclusion

LangDB’s built-in Search tool addresses the limitations of static LLMs by integrating real-time web search, so your AI can retrieve relevant, up-to-date information. Whether you're building chatbots, research tools, or automation systems, dynamic search grounds responses in retrieved data, which reduces hallucinations and supports better decision-making.