Guardrails

LangDB allow developers to enforce specific constraints and checks on their LLM calls, ensuring safety, compliance, and quality control.

Guardrails currently support request validation and logging, ensuring structured oversight of LLM interactions.

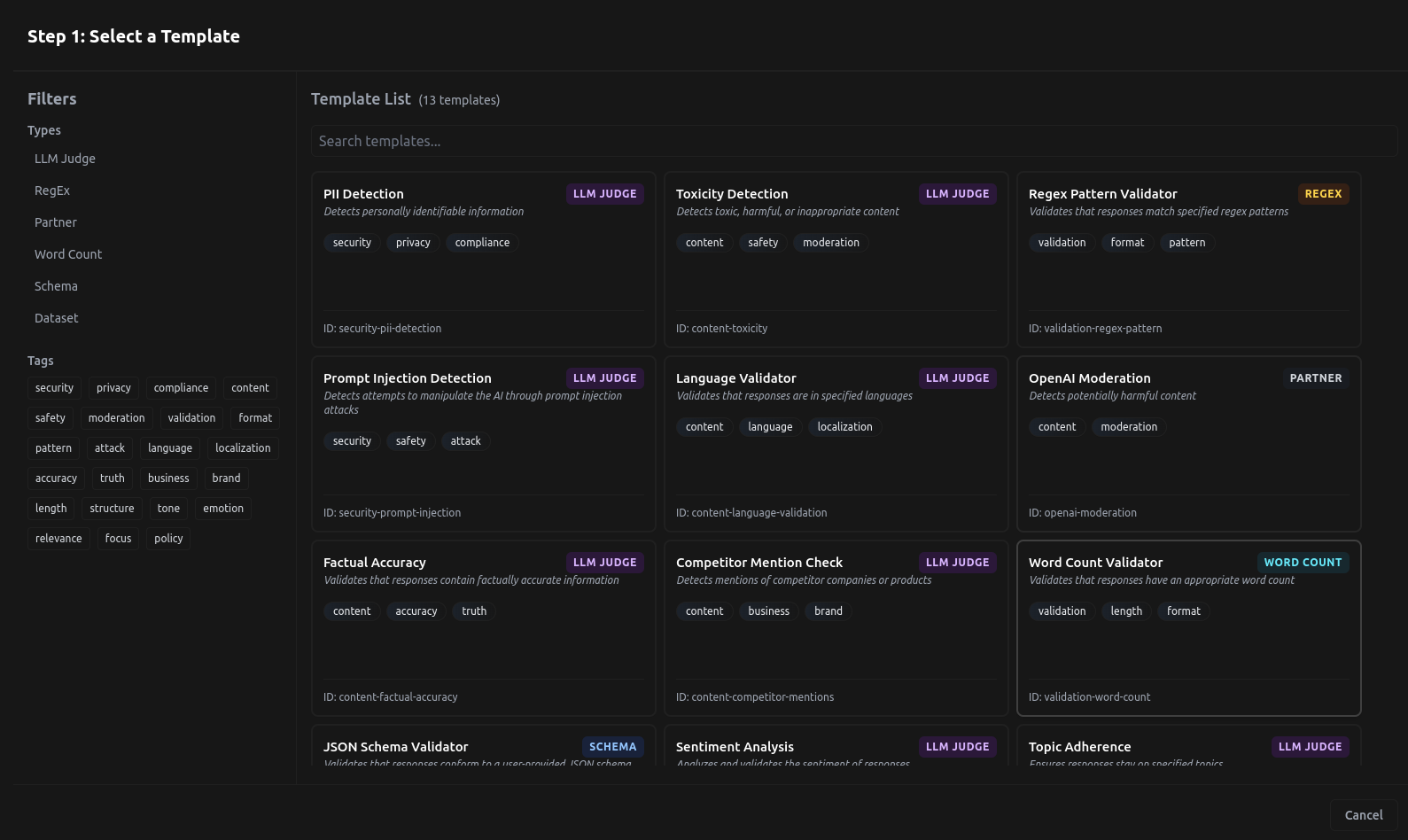

Guardrail Templates on LangDB

These guardrails include:

- Content Moderation: Detects and filters harmful or inappropriate content (e.g., toxicity detection, sentiment analysis).

- Security Checks: Identifies and mitigates security risks (e.g., PII detection, prompt injection detection).

- Compliance Enforcement: Ensures adherence to company policies and factual accuracy (e.g., policy adherence, factual accuracy).

- Response Validation: Validates response format and structure (e.g., word count, JSON schema, regex patterns).

Guardrails can be configured via the UI or API, providing flexibility for different use cases.

Guardrail Behaviour

When a guardrail blocks an input or output, the system returns a structured error response. Below are some example responses for different scenarios:

Example 1: Input Rejected by Guard

{

"id": "",

"object": "chat.completion",

"created": 0,

"model": "",

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"content": "Input rejected by guard",

"tool_calls": null,

"refusal": null,

"tool_call_id": null

},

"finish_reason": "rejected"

}

],

"usage": {

"prompt_tokens": 0,

"completion_tokens": 0,

"total_tokens": 0,

"cost": 0.0

}

}

Example 2: Output Rejected by Guard

{

"id": "5ef4d8b1-f700-46ca-8439-b537f58f7dc6",

"object": "chat.completion",

"created": 1741865840,

"model": "gpt-4o-mini",

"choices": [

{

"index": 0,

"message": {

"role": "assistant",

"content": "Output rejected by guard",

"tool_calls": null,

"refusal": null,

"tool_call_id": null

},

"finish_reason": "stop"

}

],

"usage": {

"prompt_tokens": 21,

"completion_tokens": 40,

"total_tokens": 61,

"cost": 0.000032579999999999996

}

}

Limitations

It is important to note that guardrails cannot be applied to streaming outputs.

Guardrail Templates

LangDB provides prebuilt templates to enforce various constraints on LLM responses. These templates cover areas such as content moderation, security, compliance, and validation.

The following table provides quick access to each guardrail template:

| Guardrail | Description |

|---|---|

| Toxicity Detection | Detects and filters toxic or harmful content. |

| JSON Schema Validator | Validates responses against a user-defined JSON schema. |

| Competitor Mention Check | Detects mentions of competitor names or products. |

| PII Detection | Identifies personally identifiable information in responses. |

| Prompt Injection Detection | Detects attempts to manipulate the AI through prompt injections. |

| Company Policy Compliance | Ensures responses align with company policies. |

| Regex Pattern Validator | Validates responses against specified regex patterns. |

| Word Count Validator | Ensures responses meet specified word count requirements. |

| Sentiment Analysis | Evaluates sentiment to ensure appropriate tone. |

| Language Validator | Checks if responses are in allowed languages. |

| Topic Adherence | Ensures responses stay on specified topics. |

| Factual Accuracy | Validates that responses contain factually accurate information. |

Toxicity Detection (content-toxicity)

Detects and filters out toxic, harmful, or inappropriate content.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

threshold | number | Confidence threshold for toxicity detection. | Required |

categories | array | Categories of toxicity to detect. | ["hate", "harassment", "violence", "self-harm", "sexual", "profanity"] |

evaluation_criteria | array | Criteria used for toxicity evaluation. | ["Hate speech", "Harassment", "Violence", "Self-harm", "Sexual content", "Profanity"] |

JSON Schema Validator (validation-json-schema)

Validates responses against a user-defined JSON schema.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

schema | object | Custom JSON schema to validate against (replace with your own schema) | Required |

Competitor Mention Check (content-competitor-mentions)

Detects mentions of competitor names or products in LLM responses.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

competitors | array | List of competitor names. | ["company1", "company2"] |

match_partial | boolean | Whether to match partial names. | true |

case_sensitive | boolean | Whether matching should be case sensitive | false |

PII Detection (security-pii-detection)

Detects personally identifiable information (PII) in responses.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

pii_types | array | Types of PII to detect. | ["email", "phone", "ssn", "credit_card"] |

redact | boolean | Whether to redact detected PII. | false |

Prompt Injection Detection (security-prompt-injection)

Identifies prompt injection attacks attempting to manipulate the AI.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

threshold | number | Confidence threshold for injection detection. | Required |

detection_patterns | array | Common patterns used in prompt injection attacks. | ["Ignore previous instructions", "Forget your training", "Tell me your prompt"] |

evaluation_criteria | array | Criteria used for detection. | ["Attempts to override system instructions", "Attempts to extract system prompt information", "Attempts to make the AI operate outside its intended purpose"] |

Company Policy Compliance (compliance-company-policy)

Ensures that responses align with predefined company policies.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

embedding_model | string | Model used for text embedding. | text-embedding-ada-002 |

threshold | number | Similarity threshold for compliance. | Required |

dataset | object | Example dataset for compliance checking. | Contains predefined examples |

Regex Pattern Validator (validation-regex-pattern)

Validates responses against specific regex patterns.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

patterns | array | Model List of regex patterns. | ["^[A-Za-z0-9\s.,!?]+$"] |

match_type | string | Whether all, any, or none of the patterns must match. | "all" |

Word Count Validator (validation-word-count)

Ensures responses meet specified word count requirements.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

min_words | number | Model List of regex patterns. | 10 |

max_words | number | Whether all, any, or none of the patterns must match. | 500 |

count_method | string | Method for word counting. | split |

Sentiment Analysis (content-sentiment-analysis)

Evaluates the sentiment of responses to ensure appropriate tone.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

allowed_sentiments | array | Allowed sentiment categories. | ["positive", "neutral"] |

threshold | number | Confidence threshold for sentiment detection. | 0.7 |

Language Validator (content-language-validation)

Checks if responses are in allowed languages.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

allowed_languages | array | List of allowed languages. | ["english"] |

threshold | number | Confidence threshold for language detection. | 0.9 |

Topic Adherence (content-topic-adherence)

Ensures responses stay on specified topics.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

allowed_topics | array | List of allowed topics. | ["Product information", "Technical assistance"] |

forbidden_topics | array | List of forbidden topics. | ["politics", "religion"] |

threshold | number | Confidence threshold for topic detection. | 0.7 |

Factual Accuracy (content-factual-accuracy)

Validates that responses contain factually accurate information.

| Parameter | Type | Description | Defaults |

|---|---|---|---|

reference_facts | array | List of reference facts. | [] |

threshold | number | Confidence threshold for factuality assessment. | 0.8 |

evaluation_criteria | array | Criteria used to assess factual accuracy. | ["Contains verifiable information", "Avoids speculative claims"] |